Many APIs return inconsistent data.

The same field might be:

- a string in one response

- a number in another

- null, empty, or missing in others

This breaks validation, analytics, exports, and automation workflows. In this guide, you will learn how to normalize inconsistent API fields into predictable JSON using a repeatable JSON pipeline. If the response also contains noisy fields, start by learning how to clean API responses before normalization.

Used in analytics pipelines, ETL workflows, and API integrations, normalization keeps messy JSON usable across systems.

What is API field normalization?

API field normalization is the process of converting inconsistent API values into a predictable, stable format so downstream systems can reliably process the data.

It is one part of API data cleanup: first remove noisy values, then normalize JSON data into consistent types, and finally validate the cleaned output before it reaches analytics, storage, or automation.

Normalize API fields in 3 steps

- Clean the raw API response

- Normalize field types and values

- Validate the final structure

This ensures consistent, reusable JSON for downstream systems.
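The third step, validation, can be as small as a type check per field. The sketch below is only an illustration: the `EXPECTED_TYPES` contract, the `validate_record` helper, and the `name` field are hypothetical, not part of any specific tool.

```python
from typing import Any

# Hypothetical contract: field name -> Python types allowed after normalization.
EXPECTED_TYPES: dict[str, tuple[type, ...]] = {
    "price": (int, float, type(None)),
    "subscribed": (bool,),
    "name": (str,),
}

def validate_record(record: dict[str, Any]) -> list[str]:
    """Return a list of problems; an empty list means the record is valid."""
    problems: list[str] = []
    for field, allowed in EXPECTED_TYPES.items():
        if field not in record:
            problems.append(f"{field}: missing")
            continue
        value = record[field]
        # bool is a subclass of int in Python, so reject it explicitly
        # for fields that do not list bool as an allowed type.
        is_stray_bool = isinstance(value, bool) and bool not in allowed
        if is_stray_bool or not isinstance(value, allowed):
            problems.append(f"{field}: unexpected type {type(value).__name__}")
    return problems

print(validate_record({"price": 29.99, "subscribed": True, "name": "Ada"}))  # []
```

Returning a list of problems instead of raising on the first one makes it easier to log every broken field in a single pass.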

Why inconsistent API fields are hard to use

- Numbers may arrive as strings, like "29.99" instead of 29.99

- Empty values may appear as null, "", "N/A", or missing fields

- Booleans may appear as true, "true", "yes", or 1

- The same field may change shape between API responses

To make API data usable, normalize those fields before storing, validating, exporting, or sending them downstream.
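Before deciding on rules, it helps to see which fields actually vary. The following sketch, assuming the responses are already parsed into Python dictionaries, reports the set of types observed per field. The `field_type_report` helper and the sample records are made up for illustration, and the type names are Python's (`str`, `NoneType`), not JSON's.

```python
from collections import defaultdict
from typing import Any

def field_type_report(records: list[dict[str, Any]]) -> dict[str, set[str]]:
    """Map each field name to the set of type names seen across all records."""
    seen: dict[str, set[str]] = defaultdict(set)
    all_fields = {key for record in records for key in record}
    for record in records:
        for field in all_fields:
            if field not in record:
                seen[field].add("missing")
            else:
                seen[field].add(type(record[field]).__name__)
    return dict(seen)

# Hypothetical sample of three responses from the same endpoint.
responses = [
    {"price": "29.99", "subscribed": "yes"},
    {"price": 29.99, "subscribed": True},
    {"price": None},
]

report = field_type_report(responses)
# Fields with more than one observed type are candidates for normalization.
mixed = {field: types for field, types in report.items() if len(types) > 1}
print(sorted(mixed))  # ['price', 'subscribed']
```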

How to normalize inconsistent API fields

To normalize inconsistent API fields:

- Paste the API response into the editor.

- Identify fields with mixed types or unstable empty values.

- Convert each field into one expected format.

- Preview the cleaned output and reuse the workflow.

The goal is not just prettier JSON. The goal is a stable contract: price is always a number or null, subscribed is always a boolean, and empty values follow one rule instead of several.
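As a rough sketch of that contract in code: `price` becomes a number or null, and `subscribed` becomes a boolean. The helper names, the list of empty markers, and the choice to treat unrecognized boolean values as `False` are all assumptions made for this example, not rules from a specific tool.

```python
import math
from typing import Any, Optional

# Assumed spellings of "empty" and "true"; adjust to what your API really sends.
EMPTY_MARKERS = {"", "n/a", "null", "none"}
TRUE_MARKERS = {"true", "yes", "1"}

def to_number(value: Any) -> Optional[float]:
    """Return a finite float, or None when the value is empty or unparseable."""
    if value is None or isinstance(value, bool):
        return None
    if isinstance(value, (int, float)):
        return float(value) if math.isfinite(value) else None
    if isinstance(value, str):
        text = value.strip()
        if text.lower() in EMPTY_MARKERS:
            return None
        try:
            number = float(text)
        except ValueError:
            return None
        return number if math.isfinite(number) else None
    return None

def to_bool(value: Any) -> bool:
    """Return True only for recognized truthy values; everything else is False."""
    if isinstance(value, bool):
        return value
    if isinstance(value, (int, float)):
        return value == 1
    if isinstance(value, str):
        return value.strip().lower() in TRUE_MARKERS
    return False

def normalize_record(raw: dict[str, Any]) -> dict[str, Any]:
    """Apply the contract: price is a number or None, subscribed is a bool."""
    return {
        "price": to_number(raw.get("price")),
        "subscribed": to_bool(raw.get("subscribed")),
    }

print(normalize_record({"price": "29.99", "subscribed": "yes"}))
# {'price': 29.99, 'subscribed': True}
```

Mapping unknown boolean values to `False` keeps the field strictly boolean. If "unknown" must stay distinguishable from "no", a nullable boolean is the safer contract.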

How to normalize JSON data from APIs

Normalizing JSON data means converting inconsistent field types, values, and structures into a stable format before using the data in applications, analytics, or automation workflows.

For an API response, this usually means fixing inconsistent JSON at the field level first: numbers become numbers, booleans become booleans, empty values follow one rule, and nested objects use a predictable shape. A JSON normalization pipeline is a reliable way to standardize API data across systems because the same rules run every time the endpoint returns new data.

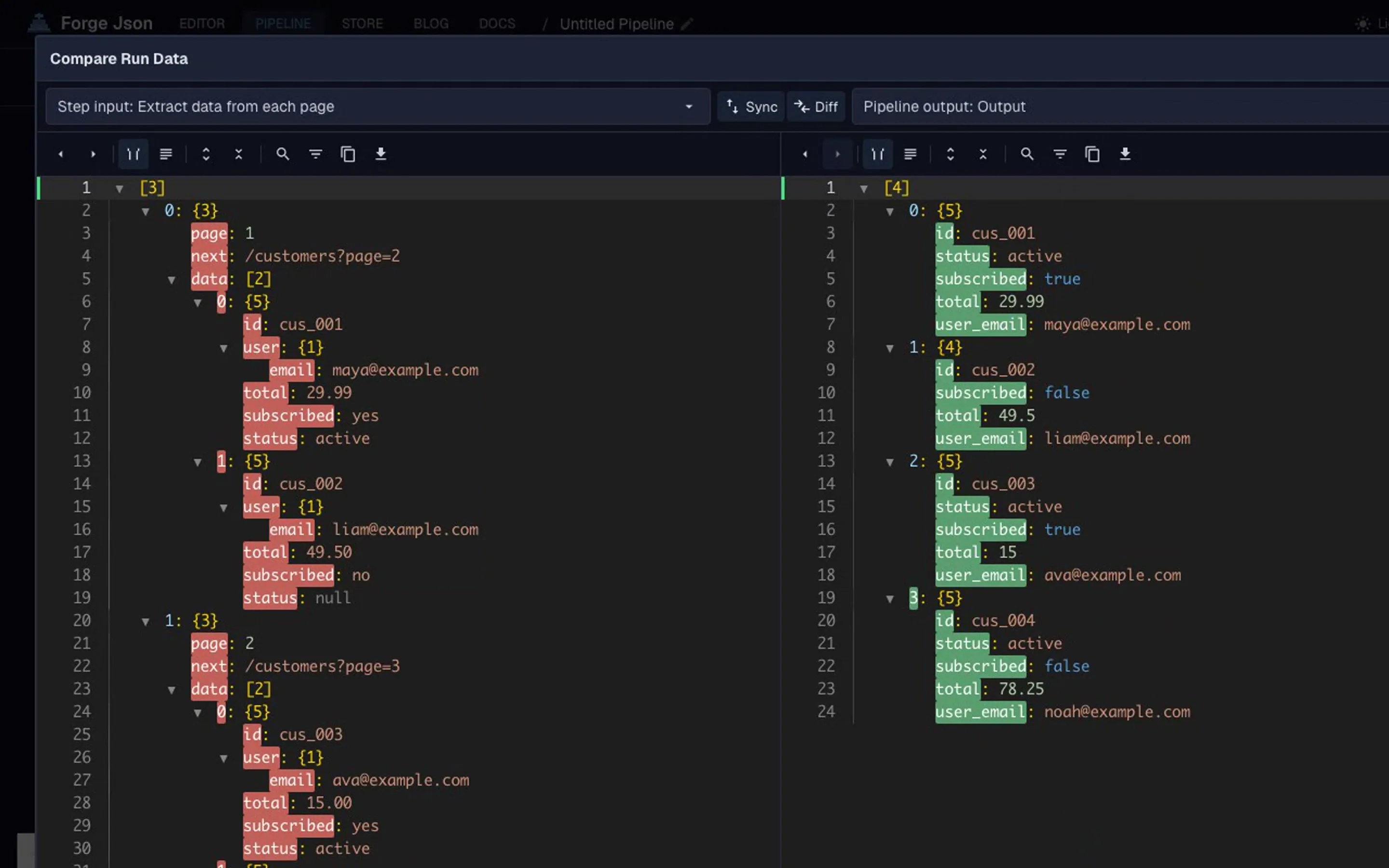

The normalization pipeline

This workflow uses 3 steps:

- Convert string numbers to numbers (age, price) using format-values

- Map inconsistent values to standard values ("yes" to true, null to "unknown", empty fields removed) using map-values

- Clean remaining noise (trim whitespace, drop dead fields) using clean-json

The combination handles both type consistency and structural cleanup. Each step has a single job, which keeps the workflow easy to audit and rerun.

Clean JSON is the cleanup step in the pipeline. Combine it with format-values and map-values for full normalization.
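To make the three steps concrete, here is a minimal Python sketch that mirrors them. The functions `format_values`, `map_values`, and `clean_json` are hypothetical stand-ins named after the steps above, not the actual implementation of those steps. The `"no"` mapping, the `_debug` dead field, and the sample record are assumptions for the example.

```python
import math
from typing import Any

Record = dict[str, Any]

# "yes" -> True comes from the step description; "no" -> False is an assumption.
VALUE_MAP = {"yes": True, "no": False}

def format_values(record: Record, numeric_fields: tuple[str, ...] = ("age", "price")) -> Record:
    """Step 1: convert string numbers in the listed fields to real numbers."""
    out = dict(record)
    for field in numeric_fields:
        value = out.get(field)
        if not isinstance(value, str):
            continue
        try:
            number = float(value.strip())
        except ValueError:
            continue  # leave unparseable strings untouched
        if math.isfinite(number):
            out[field] = int(number) if number.is_integer() else number
    return out

def map_values(record: Record) -> Record:
    """Step 2: map known values, replace None with "unknown", drop empty strings."""
    out: Record = {}
    for field, value in record.items():
        if isinstance(value, str) and value.strip() == "":
            continue  # empty fields are removed
        if value is None:
            out[field] = "unknown"
        elif isinstance(value, str) and value.strip().lower() in VALUE_MAP:
            out[field] = VALUE_MAP[value.strip().lower()]
        else:
            out[field] = value
    return out

def clean_json(record: Record, dead_fields: frozenset[str] = frozenset({"_debug"})) -> Record:
    """Step 3: trim whitespace on strings and drop fields listed as dead."""
    return {
        field: value.strip() if isinstance(value, str) else value
        for field, value in record.items()
        if field not in dead_fields
    }

def run_pipeline(record: Record) -> Record:
    """Run the three steps in order; each step returns a new dictionary."""
    for step in (format_values, map_values, clean_json):
        record = step(record)
    return record

raw = {"age": "34", "price": "29.99", "subscribed": "yes", "plan": None,
       "note": "", "name": "  Ada ", "_debug": "x"}
normalized = run_pipeline(raw)
print(normalized)
# {'age': 34, 'price': 29.99, 'subscribed': True, 'plan': 'unknown', 'name': 'Ada'}
```

Because each step takes a record and returns a new one, steps can be reordered, tested in isolation, or rerun on fresh responses without side effects.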

Common inconsistent API field formats

| Type | Example | Notes |
|---|---|---|
| String number | "42" | Should often become 42 |
| Empty value | "", "N/A", null | Should become one consistent empty state |
| Boolean-like value | "true", "yes", 1 | Should become true or false |
| Mixed date value | "2026-04-01", "", null | Should become a valid date string or null |

These formats usually appear when data comes from multiple services, older API versions, user-generated forms, or third-party integrations. Without a repeatable workflow, they turn into manual cleanup, one-off scripts, and fragile import logic.
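The mixed date row from the table can be handled with the standard library alone. This is a small sketch, assuming dates are expected in ISO 8601 `YYYY-MM-DD` form; the `to_iso_date` helper is a hypothetical name.

```python
from datetime import date
from typing import Any, Optional

def to_iso_date(value: Any) -> Optional[str]:
    """Return a valid ISO date string, or None for empty or invalid input."""
    if not isinstance(value, str):
        return None
    try:
        # date.fromisoformat raises ValueError for "" and for impossible dates.
        return date.fromisoformat(value.strip()).isoformat()
    except ValueError:
        return None

for sample in ("2026-04-01", "", None, "2026-13-01"):
    print(repr(sample), "->", repr(to_iso_date(sample)))
```

Parsing and re-serializing, rather than matching a pattern, also rejects strings that look like dates but are not, such as a month of 13.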

For the underlying JSON data types and parsing behavior, MDN's JSON reference is useful technical background.

API field normalization examples

Real-world normalization usually starts with a clear downstream use case:

- Ecommerce orders: convert total, tax, and shipping from string numbers into real numbers before revenue reporting.

- Webhook payloads: convert "yes", "true", 1, and true into one boolean format before automation rules run.

- Analytics pipelines: replace "", "N/A", and missing values with null so dashboards do not split the same meaning into separate buckets.

- Third-party integrations: fix inconsistent JSON from different vendors before merging records into one customer, product, or order model.

- Nested JSON responses: normalize customer.id, customer.email, and items[].price before flattening the response for CSV or analytics.

These cases all use the same pattern: define the expected field shape, normalize JSON data into that shape, then pass the result into a JSON transformation pipeline for validation, export, or storage.
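The nested case is a good illustration of "normalize first, then flatten". The sketch below assumes an order payload with a `customer` object and an `items` array. The helper names are hypothetical, and so are the decisions to store `customer.id` as a string and to lowercase `customer.email`.

```python
from typing import Any, Optional

def _price(value: Any) -> Optional[float]:
    """Parse a price into a float; return None for empty or invalid values."""
    if isinstance(value, bool) or value is None:
        return None
    if isinstance(value, (int, float)):
        return float(value)
    try:
        return float(str(value).strip())
    except ValueError:
        return None

def normalize_order(raw: dict[str, Any]) -> dict[str, Any]:
    """Give customer.id, customer.email, and items[].price one predictable shape."""
    customer = raw.get("customer") or {}
    items = raw.get("items") or []
    raw_id = customer.get("id")
    email = customer.get("email")
    return {
        "customer": {
            # Assumption: IDs are stored as strings so 42 and "42" match.
            "id": str(raw_id) if raw_id is not None else None,
            "email": email.strip().lower() if isinstance(email, str) and email.strip() else None,
        },
        "items": [{"price": _price(item.get("price"))} for item in items],
    }

def flatten_rows(order: dict[str, Any]) -> list[dict[str, Any]]:
    """Produce one flat row per item, ready for CSV export or analytics."""
    return [
        {
            "customer_id": order["customer"]["id"],
            "customer_email": order["customer"]["email"],
            "item_price": item["price"],
        }
        for item in order["items"]
    ]

raw_order = {
    "customer": {"id": 42, "email": " Ada@Example.com "},
    "items": [{"price": "19.50"}, {"price": 5}, {"price": "N/A"}],
}
rows = flatten_rows(normalize_order(raw_order))
print(rows[0])
# {'customer_id': '42', 'customer_email': 'ada@example.com', 'item_price': 19.5}
```

Normalizing before flattening means every row already follows the contract, so the export step needs no type handling of its own.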